You are navigating a rapidly evolving digital landscape in which cyber threats keep rising, and a formidable new predator has emerged: AI-powered phishing. This article is both a warning and a guide, making the case for robust team training to counter these sophisticated attacks.

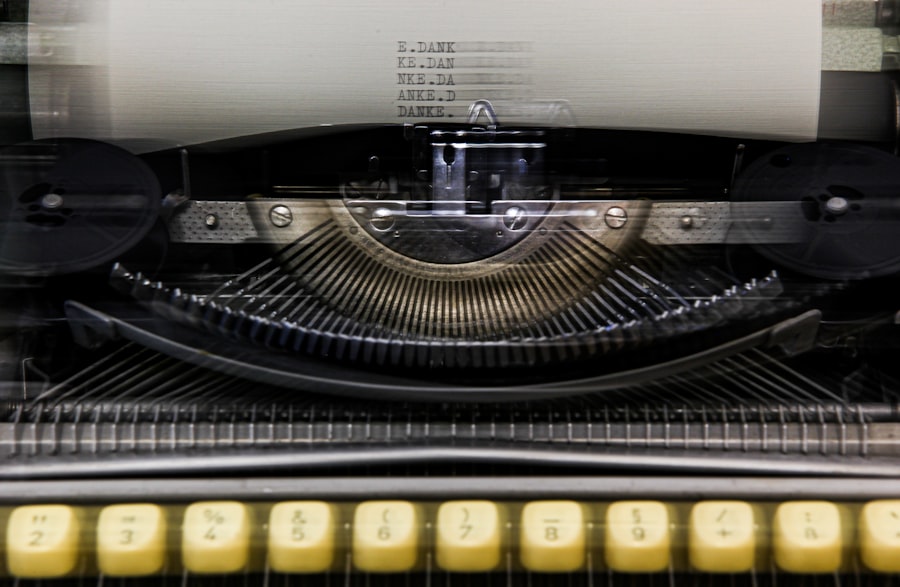

For years, phishing has been a persistent nuisance, a digital con artist attempting to trick you into revealing sensitive information. Originally, these attacks were often rudimentary, characterized by glaring grammatical errors, generic greetings, and suspicious-looking links. You could, with a discerning eye, often spot them from a mile away.

Early Phishing: The “Nigerian Prince” and its Legions

Remember the classic “Nigerian Prince” scam? You likely encountered variations of it: unsolicited emails promising vast fortunes in exchange for a small upfront fee or personal details. These were the digital equivalent of a hand-written sign on the back of a van, lacking professional polish but occasionally snagging unsuspecting victims.

Key characteristics of early phishing attacks included:

- Grammatical and Spelling Errors: A telltale sign of non-native authorship or rushed composition.

- Generic Salutations: “Dear Customer” or “Valued Member” instead of your name.

- Obvious Urgency and Threats: “Your account will be suspended if you don’t act now!” designed to induce panic.

- Suspicious Sender Addresses: Email addresses that clearly didn’t belong to the purported organization.

- Unusual Formatting and Low-Resolution Logos: Indicative of a hastily created imitation.

These early forms relied heavily on human gullibility and a lack of digital literacy. You could often discern their fraudulent nature by simply applying a healthy dose of skepticism and a critical eye.
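Of the checks in the list above, the sender-address check is the easiest to partly automate. The Python sketch below compares the domain of a From header with the domain of the organization the message claims to come from. The function name `sender_domain_mismatch` and the example domains are hypothetical. The check only inspects header text: it cannot tell whether the header itself was forged, and, as the rest of this article explains, a clean result does not make a message safe.

```python
from email.utils import parseaddr


def sender_domain_mismatch(from_header: str, claimed_domain: str) -> bool:
    """Return True if the From address is not on the claimed organization's domain.

    The display name ("Example Bank") is ignored because the sender controls it;
    only the domain after the "@" is compared. Subdomains of the claimed domain
    (e.g. mail.examplebank.com) are accepted.
    """
    _display_name, address = parseaddr(from_header)
    if "@" not in address:
        return True  # Unparseable sender: treat as suspicious.
    domain = address.rsplit("@", 1)[1].lower().rstrip(".")
    claimed = claimed_domain.lower().rstrip(".")
    return not (domain == claimed or domain.endswith("." + claimed))


# A polished display name cannot hide a sender domain that doesn't belong.
print(sender_domain_mismatch('"Example Bank" <alerts@example-bank-security.com>', "examplebank.com"))  # True
print(sender_domain_mismatch('"Example Bank" <alerts@mail.examplebank.com>', "examplebank.com"))       # False
```

The suffix check uses `"." + claimed` so that a lookalike such as `notexamplebank.com` is not mistaken for a subdomain.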

The Rise of Sophistication: Spear Phishing and Whaling

As digital literacy improved, attackers adapted. They moved beyond mass-broadcast, generic attacks to more targeted approaches. This marked the birth of spear phishing and whaling, where the attacker conducted reconnaissance to tailor their emails to specific individuals or high-value targets within your organization.

- Spear Phishing: Imagine a sniper, carefully choosing their target and crafting a personalized message. Spear phishing emails might reference your recent purchases, your role within the company, or even your personal interests, making them far more convincing. They often exploit public information available on social media or company websites.

- Whaling: This is spear phishing elevated to the executive suite. Whaling attacks target senior management – CEOs, CFOs, COOs – hoping to gain access to highly sensitive information or authorize significant financial transfers. These attacks are meticulously crafted, often impersonating other high-ranking executives or board members.

The key change here was the element of personalization. Attackers weren’t just casting a wide net; they were carefully selecting their prey and crafting bespoke lures. This required a greater degree of vigilance from you and your team.

The AI Infusion: A Game Changer in Deception

Now, you face an entirely new paradigm: AI-powered phishing. This isn’t just an incremental improvement; it’s a fundamental shift in the attacker’s capabilities. Artificial intelligence, particularly large language models (LLMs) like GPT-3.5 and GPT-4, is transforming how phishing attacks are conceptualized, crafted, and executed.

Imagine a highly skilled, tirelessly working con artist who can instantaneously learn from past mistakes, understand your communication style, and generate perfectly tailored, contextually relevant emails at scale. That’s the power AI brings to phishing.

Impact of AI on How Phishing Is Crafted:

- Flawless Language and Grammar: AI eliminates the linguistic red flags common in older phishing attempts. It can generate perfectly written, natural-sounding English, or any other language, with accurate grammar, syntax, and tone.

- Hyper-Personalization at Scale: AI can analyze vast amounts of data to create highly personalized emails for thousands, even millions, of targets simultaneously. It can incorporate details about your role, projects, colleagues, and even personal interests, making the emails incredibly convincing.

- Mimicry of Communication Styles: AI can learn and replicate the writing style of individuals within your organization. Imagine an email from a “colleague” that not only sounds like them but uses their typical phrasing and humor. This blurs the line between legitimate and fraudulent communication.

- Generating Believable Scenarios: AI can invent plausible scenarios for urgency, requests, or information disclosure. It can craft compelling narratives that exploit human psychology, leveraging fear, curiosity, or a sense of duty.

The challenge for you and your team is therefore significantly amplified. The old rulebook for identifying phishing is rapidly becoming obsolete. You need a new strategy.


The Mechanics of AI-Powered Phishing: How it Works

Understanding the enemy’s tools and tactics is paramount. AI-powered phishing doesn’t rely on simple scripts; it leverages sophisticated algorithms and vast datasets to achieve its objectives. Think of it as a digital chameleon, capable of blending seamlessly into your digital environment.

Data Harvesting and Target Profiling

Before an AI crafts a single phishing email, it likely engages in extensive data harvesting. This process is analogous to a meticulous detective gathering every available scrap of information about a potential victim.

- Open-Source Intelligence (OSINT): AI systems can crawl public websites, social media platforms (LinkedIn, Facebook, X/Twitter), company websites, news articles, and even publicly available court documents. This provides a wealth of information about individuals, their roles, colleagues, projects, and even personal details.

- Breached Data and Dark Web: Unfortunately, past data breaches have made vast quantities of personal and professional information available on the dark web. AI can analyze this compromised data to build incredibly detailed profiles, including email addresses, phone numbers, past passwords, and even personal interests.

- Company-Specific Data: AI can analyze publicly available information about your company – its organizational structure, recent announcements, upcoming projects, and key personnel – to understand its internal dynamics and identify potential vulnerabilities.

From this data, robust target profiles are built. These profiles inform the AI about the most effective vectors, the most believable impersonations, and the most persuasive narratives for each individual.

Content Generation and Impersonation

This is where the power of LLMs truly shines. Once a profile is built, the AI generates the content of the phishing attempt.

- Email Content Generation: AI can craft compelling email bodies that are grammatically flawless, contextually relevant, and designed to elicit a specific action. It can mimic the tone of a CEO, a service provider, or a trusted colleague. The AI can dynamically adjust its language and style based on the target’s profile.

- Voice Phishing (Vishing) Scripts: AI can generate scripts for phone calls that sound authentic and urgent. It can even synthesize voices that closely resemble individuals, adding another layer of deception. Imagine receiving a call from what sounds exactly like your CEO, requesting an urgent financial transfer.

- Deepfake Creation (Visual Phishing): While more complex and less common for broad phishing campaigns, AI is capable of generating deepfake videos or images. These can be used in highly targeted “whaling” attacks to create a fabricated video call or a convincing image of someone requesting sensitive information.

- Website/Login Page Cloning: AI can rapidly analyze and replicate legitimate websites, creating perfect clones of login pages. These fake pages are indistinguishable from the real thing to the untrained eye, tricking you into entering your credentials.

The capacity for AI to produce diverse and convincing attack vectors across multiple mediums significantly expands the attacker’s arsenal.

Behavioral Manipulation and Social Engineering

AI excels at exploiting human psychology. It’s not just about generating convincing text; it’s about understanding how to make you react.

- Urgency and Fear: AI algorithms can identify scenarios that naturally induce urgency (e.g., “account suspended,” “unusual login activity,” “payment overdue”) and craft messages that leverage these anxieties.

- Authority and Compliance: By impersonating figures of authority (CEO, IT administrator, government official), AI-generated messages can compel recipients to comply with requests without critical thought.

- Curiosity and Trust: AI can create messages that pique your curiosity (e.g., “new company policy,” “important document shared,” “unusual activity on your social media”) or build false trust by referencing shared context or personal details.

- Exploiting Current Events: AI can rapidly integrate current events, news cycles, and trending topics into its phishing campaigns, making them appear highly relevant and timely. For example, during a global health crisis, AI could generate phishing emails related to vaccine appointments or stimulus payments.

The AI analyzes your likely vulnerabilities and designs its attack to exploit them with precision. It’s a digital puppet master, pulling on the strings of human emotion and cognitive biases.

Key Indicators: The Fading Red Flags of Phishing

You might be thinking you know how to spot a phishing email. You’ve been taught to look for specific red flags. However, with AI in the attacker’s corner, many of these traditional indicators are effectively neutralized. The old “rules of thumb” are becoming dangerously unreliable.

The Demise of Grammatical Errors and Poor Formatting

Historically, the easiest way to spot a phishing email was its poor grammar, spelling mistakes, and amateurish formatting. These were the hallmarks of quick, unsophisticated attacks.

- AI’s Linguistic Precision: LLMs are trained on vast corpora of text, making them expert wordsmiths. They can generate grammatical, idiomatic English and many other languages. This means you can no longer count on telltale slips such as a misused “kindly” or an awkward sentence structure.

- Professional Formatting: AI can also ensure that emails adhere to professional formatting standards. It can mimic corporate email templates, use correct branding, and ensure consistent fonts and spacing, making the message appear legitimate.

Reliance on these visual and linguistic cues is now a dangerous gamble. The message you receive might be impeccably written and formatted, yet still be entirely malicious.

Hyper-Personalization: A Double-Edged Sword

While personalization was once a sign of a more advanced, targeted attack, AI takes this to an unprecedented level.

- Contextual Accuracy: AI can craft emails that are not just addressed to you by name, but that reference your specific projects, recent conversations (if data has been breached), your role, or even your personal interests. This deep personalization makes the message feel incredibly legitimate and difficult to distrust.

- Mimicking Known Contacts: Perhaps most alarmingly, AI can analyze communication patterns and style to impersonate your colleagues, superiors, or trusted vendors with chilling accuracy. The email might even adopt their unique phrases or jargon. This blurs the line between a genuine internal communication and a sophisticated impersonation.

The very elements that make a message feel relevant and trustworthy are now being weaponized by AI. Trusting a personalized email without further verification is a significant risk.

The Authenticity of Links and Attachments (Visual Deception)

Even the visual appearance of links and attachments is no longer a reliable indicator.

- Sophisticated Link Disguises: While direct inspection of a URL (hovering over it without clicking) remains a vital step, AI can generate highly plausible-looking domain names that are just one or two characters off from legitimate ones (typosquatting). Furthermore, some phishing sites use clever redirection or exploit vulnerabilities that make the visible URL appear correct.

- Realistic Attachment Names: AI can generate attachment names that are perfectly in line with corporate documents or expected files. “Q3_Report_Final.pdf” or “Invoice_123456.xlsx” are now generated with perfect contextual relevance.

- Brand Impersonation: AI-generated images and branding can perfectly replicate those of your company or trusted third parties. The logos, color schemes, and visual elements within the email and on linked landing pages can be indistinguishable from the real thing.
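Typosquatting, mentioned in the first item above, can be screened for mechanically. A minimal sketch, assuming you maintain your own allowlist of trusted domains: compute the Levenshtein edit distance between a link's domain and each trusted domain, and flag any domain that is close to, but not exactly, a trusted one. The helper names, the allowlist, and the two-edit threshold are illustrative assumptions. Edit distance misses lookalikes built with other tricks and can flag harmless short domains, so treat a hit as a prompt to verify rather than a verdict.

```python
from __future__ import annotations


def levenshtein(a: str, b: str) -> int:
    """Edit distance: minimum insertions, deletions, and substitutions turning a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if the characters match)
            ))
        prev = curr
    return prev[-1]


def imitated_domain(domain: str, trusted: list[str], max_edits: int = 2) -> str | None:
    """Return the trusted domain that `domain` appears to imitate, or None.

    Exact matches are legitimate; near misses within `max_edits` are suspicious.
    """
    domain = domain.lower().rstrip(".")
    if domain in trusted:
        return None
    for good in trusted:
        if levenshtein(domain, good) <= max_edits:
            return good
    return None


TRUSTED = ["examplebank.com", "examplecorp.com"]  # hypothetical allowlist
print(imitated_domain("examp1ebank.com", TRUSTED))  # examplebank.com ("l" replaced by "1")
print(imitated_domain("examplebank.com", TRUSTED))  # None (exact match)
```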

The traditional “red flags” are fading into the background, leaving you and your team increasingly vulnerable if you rely on outdated detection methods. A new mindset and comprehensive training are critically required.
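The hover-before-you-click habit can also be approximated in code. The sketch below uses only Python's standard library to collect every link in an HTML email body and flag those whose visible text looks like a web address on a different host from the link's real target. The class and function names are hypothetical, and the check is deliberately narrow: it ignores links whose text is a phrase such as “Click here”, and it cannot see redirects that happen after the click.

```python
from __future__ import annotations

from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkCollector(HTMLParser):
    """Collect (href, visible text) pairs for every <a> element."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[tuple[str, str]] = []
        self._href: str | None = None
        self._text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href") or ""
            self._text = []

    def handle_data(self, data):
        if self._href is not None:  # only record text that sits inside a link
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def _host(url: str) -> str:
    """Lowercased hostname without a leading 'www.'; empty string if none."""
    try:
        host = urlparse(url if "://" in url else "https://" + url).hostname or ""
    except ValueError:  # malformed text, e.g. an unbalanced "["
        return ""
    return host[4:] if host.startswith("www.") else host


def mismatched_links(html: str) -> list[tuple[str, str]]:
    """Return (visible text, real href) for links whose text names a different host."""
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    flagged = []
    for href, text in parser.links:
        # Only compare when the visible text itself looks like a bare domain or URL.
        if not text or " " in text:
            continue
        shown = _host(text)
        if "." in shown and shown != _host(href):
            flagged.append((text, href))
    return flagged


email_html = '<p>Reset here: <a href="https://examp1ebank-login.net/r">https://www.examplebank.com/login</a></p>'
print(mismatched_links(email_html))
```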

The Urgency of Training: Why “Good Enough” is No Longer Enough

The threat landscape dictates an immediate and profound shift in your approach to cybersecurity education. Complacency is the silent killer, and in the age of AI phishing, “good enough” training is tantamount to no training at all. You are in a digital arms race, and your team needs to be equipped with the most advanced defense tactics.

The Human Element: Still the Weakest Link

Despite layers of technological defenses – firewalls, email filters, antivirus software – the human operating at the keyboard remains the primary target. You are the ultimate gatekeeper, and your decisions are continuously tested by adversaries employing increasingly sophisticated methods.

- Cognitive Biases: Humans are inherently prone to cognitive biases: the tendency to react quickly under pressure, to trust authority, or to be swayed by curiosity. AI-powered phishing campaigns are expertly crafted to exploit these innate psychological vulnerabilities.

- Information Overload: You and your team are inundated with information daily. This constant stream can lead to fatigue, reducing vigilance and making you more susceptible to persuasive phishing attempts that stand out from the noise.

- Lack of Real-World Experience: If your team hasn’t encountered sophisticated AI-powered phishing simulations, they might be overconfident in their ability to detect attacks based on outdated knowledge. Theoretical understanding pales in comparison to practical exposure.

No matter how robust your technical infrastructure, a single click by a well-meaning but ill-informed employee can compromise your entire organization. Your team is your last line of defense, and it must be fortified.

Bridging the Knowledge Gap: From Awareness to Action

Traditional security awareness training often falls short. It might explain what phishing is, but it rarely translates knowledge into actionable, reflexive behaviors needed to counter AI’s advanced tactics.

- Beyond Checklists: You can no longer rely on simple checklists of “red flags,” because many of the familiar ones are gone. Training needs to focus on critical thinking, verification processes, and understanding the motivation behind a suspicious communication.

- Scenario-Based Learning: Your team needs to be exposed to realistic, AI-generated phishing scenarios. These simulations should mimic actual threats, including voice phishing, SMS phishing (smishing), and sophisticated email impersonations. This builds muscle memory for proper response.

- Understanding the “Why”: Employees often forget facts, but they remember stories and consequences. Training should explain why vigilance is crucial, illustrating the potential impact of a successful phishing attack on the individual, the team, and the entire organization.

The goal isn’t just to make people aware; it’s to embed a security-first mindset that leads to concrete, consistent actions when confronted with potential threats.

Regulatory Compliance and Reputation Protection

Beyond the immediate threat of data breaches and financial loss, inadequate security training carries significant regulatory and reputational risks.

- GDPR, CCPA, HIPAA, etc.: Many data protection regulations mandate that organizations take appropriate technical and organizational measures to protect sensitive data. Lacking effective cybersecurity training can be seen as a failure to meet these requirements, leading to hefty fines.

- Client Trust and Brand Damage: A successful phishing attack, particularly one that leads to a data breach, can severely damage your company’s reputation. Clients and partners may lose trust, leading to financial losses and long-term erosion of your brand.

- Competitive Disadvantage: In an increasingly interconnected world, security posture is a competitive differentiator. Companies with a history of breaches or a reputation for lax security will struggle to attract and retain talent and clients.

Proactive, comprehensive training isn’t just good practice; it’s a fundamental requirement for operating securely and responsibly in the digital age. It protects your bottom line, your legal standing, and your most valuable asset: your reputation.


Building a Resilient Shield: Comprehensive Training Strategies

| Metric | Value | Description |
|---|---|---|
| Increase in AI Phishing Attacks (Year-over-Year) | 300% | Growth rate of AI-powered phishing attacks reported in the last 12 months |
| Average Time to Detect AI Phishing Attack | 5 hours | Average time organizations take to identify an AI phishing attempt |
| Employee Phishing Simulation Failure Rate (Pre-Training) | 27% | Percentage of employees who failed simulated phishing tests before training |
| Employee Phishing Simulation Failure Rate (Post-Training) | 8% | Percentage of employees who failed simulated phishing tests after training |
| Percentage of Organizations Offering AI Phishing Training | 65% | Organizations that have implemented specific training against AI phishing threats |
| Reduction in Successful Phishing Attacks After Training | 70% | Decrease in successful phishing incidents following employee training programs |
| Common AI Phishing Techniques | Deepfake Emails, Personalized Spear Phishing, Automated Social Engineering | Most prevalent AI-driven phishing methods used by attackers |

To effectively counter the sophisticated threat of AI-powered phishing, you need to implement a multi-faceted and continuous training program. This isn’t a one-time event; it’s an ongoing commitment to fostering a culture of cybersecurity within your organization. Think of it as continuously sharpening a sword – it must be maintained to remain effective.

Implement Continuous Security Awareness Programs

A single annual training session is insufficient. The threat landscape changes daily, and your team’s knowledge needs to evolve with it.

- Regular Microlearning Modules: Introduce short, focused training modules throughout the year. These can cover specific tactics (e.g., vishing, smishing, QR code phishing), new trends in AI-powered attacks, or common social engineering techniques.

- Interactive Workshops: Organize hands-on workshops where employees can practice identifying and reporting suspicious communications in a safe environment. This fosters active learning rather than passive observation.

- Internal Communication Campaigns: Utilize internal newsletters, intranet announcements, and team meetings to reinforce cybersecurity best practices. Share real-world examples (anonymized, of course) of phishing attempts detected within the organization.

The goal is to keep cybersecurity top-of-mind, making vigilance an ingrained habit rather than an occasional thought.

Leverage AI-Powered Phishing Simulations

To fight AI, you must use AI. Modern security training platforms now incorporate AI to create hyper-realistic and adaptive phishing simulations.

- Tailored Scenarios: Use tools that generate phishing emails, texts, and even voice calls personalized to individuals or teams within your organization, based on their roles, public information, and even their communication style.

- Adaptive Learning Paths: Implement systems that adjust the difficulty and type of simulations based on an individual’s performance. Those who frequently fall for simulations might receive more frequent or beginner-level training, while those who excel can be challenged with more complex scenarios.

- Post-Click Training: If an employee clicks on a simulated phishing link, immediate, contextual training should be provided. This “teachable moment” is incredibly effective at reinforcing correct behavior.

- Reporting and Analytics: Utilize platforms that provide detailed reports on team-wide performance, areas of weakness, and individual progress. This data helps you identify patterns, measure the effectiveness of your training, and target specific vulnerabilities.

These simulations are invaluable for building practical experience and testing your team’s readiness in a controlled environment.
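The adaptive-difficulty and reporting ideas above reduce to simple bookkeeping over simulation results. The sketch below computes each employee's click (failure) rate and the team's reporting rate, then applies a hypothetical rule that moves someone down a difficulty level after frequent clicks and up after consistent success. The data model, the five levels, and the 10% and 30% thresholds are assumptions for illustration, not the behavior of any particular training platform.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class SimulationResult:
    employee: str
    clicked: bool   # clicked the simulated phishing link
    reported: bool  # reported it through the official channel


def click_rates(results: list[SimulationResult]) -> dict[str, float]:
    """Fraction of each employee's simulations in which they clicked."""
    sent: dict[str, int] = defaultdict(int)
    clicked: dict[str, int] = defaultdict(int)
    for r in results:
        sent[r.employee] += 1
        clicked[r.employee] += int(r.clicked)
    return {name: clicked[name] / sent[name] for name in sent}


def report_rate(results: list[SimulationResult]) -> float:
    """Team-wide fraction of simulations that were reported."""
    return sum(r.reported for r in results) / len(results) if results else 0.0


def next_level(level: int, click_rate: float,
               low: float = 0.10, high: float = 0.30, max_level: int = 5) -> int:
    """Hypothetical adaptive rule: easier after frequent clicks, harder after consistent success."""
    if click_rate > high:
        return max(1, level - 1)
    if click_rate < low:
        return min(max_level, level + 1)
    return level


results = [
    SimulationResult("ana", clicked=True, reported=False),
    SimulationResult("ana", clicked=False, reported=True),
    SimulationResult("ben", clicked=False, reported=True),
    SimulationResult("ben", clicked=False, reported=True),
]
rates = click_rates(results)                                        # {'ana': 0.5, 'ben': 0.0}
print(rates, report_rate(results))                                  # team report rate 0.75
print({name: next_level(3, rate) for name, rate in rates.items()})  # {'ana': 2, 'ben': 4}
```

Tracking the reporting rate alongside the click rate matters because, as the next section argues, a team that reports suspicious messages quickly is a defense in its own right.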

Foster a Culture of Reporting and Verification

Even the best training won’t catch every sophisticated AI attack. Your people need to be empowered to act as an active line of defense through diligent reporting and verification.

- Clear Reporting Channels: Establish and clearly communicate a simple, unambiguous process for reporting suspicious emails, texts, or calls. This could be a dedicated email alias, an internal chat channel, or a specific button in your email client.

- No Blame Policy: Crucially, implement a “no blame” policy for reporting. Employees must feel safe reporting a potential threat, even if it turns out to be benign. The fear of reprimand can lead to silence, which is far more dangerous.

- Emphasize Verification: Train everyone to verify unusual requests, especially those related to financial transactions or sensitive data, through an alternative channel. If you receive an email from the CEO requesting a wire transfer, do not reply to that email. Instead, call them on their known phone number or physically walk to their office.

- Security Champions Program: Identify and empower “security champions” within different departments. These individuals can act as first points of contact for questions, help reinforce best practices, and model secure behavior.

Empowering your team to actively participate in your security posture transforms them from potential weak links into an active defense network.
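The verification rule above can be written down as an explicit policy so that nobody has to improvise under pressure. In the sketch below, a request for a sensitive action triggers a call-back using contact details from an internal directory, and any phone number supplied in the message itself is deliberately ignored, since the attacker controls it. The directory, the action names, and the returned instructions are hypothetical placeholders for your own processes.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical internal directory: the only trusted source of contact details.
DIRECTORY = {"ceo@examplecorp.com": "+1-555-0100"}

# Hypothetical set of actions that always require out-of-band verification.
SENSITIVE_ACTIONS = {"wire_transfer", "change_bank_details", "share_credentials"}


@dataclass
class Request:
    claimed_sender: str
    action: str
    phone_in_message: Optional[str] = None  # attacker-controlled; never used for verification


def verification_step(req: Request) -> str:
    """Decide how to verify a request before acting on it."""
    if req.action not in SENSITIVE_ACTIONS:
        return "proceed: follow the normal process"
    known_number = DIRECTORY.get(req.claimed_sender.lower())
    if known_number is None:
        return "stop: sender not in directory; report to the security team"
    # Call back on the directory number, not the reply address or req.phone_in_message.
    return f"verify: call {known_number} from the directory before acting; do not reply"


urgent = Request("CEO@examplecorp.com", "wire_transfer", phone_in_message="+1-555-0199")
print(verification_step(urgent))  # verify: call +1-555-0100 from the directory before acting; do not reply
```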

Equip Your Team with the Right Tools (Beyond Training)

While training is paramount, it must be supported by the right technological safeguards. Your team’s human vigilance is complemented by automated defenses.

- Advanced Email Security Gateways: Implement and regularly update email security solutions that leverage AI and machine learning to detect and block sophisticated phishing attempts before they reach your team’s inboxes.

- Multi-Factor Authentication (MFA): Enforce MFA across all critical systems and applications. Even if credentials are compromised via a phishing attack, MFA acts as a vital secondary barrier.

- Endpoint Detection and Response (EDR): Deploy EDR solutions that monitor endpoints (computers, mobile devices) for suspicious activity, allowing for rapid detection and response even if a phishing attack bypasses initial defenses.

- Secure Browsing Extensions/Plugins: Implement browser extensions that warn about suspicious websites or dangerous links.

- Password Managers: Encourage or enforce the use of secure password managers to generate and store unique, strong passwords, reducing the impact of credential-stuffing attacks if one password is leaked.
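One widely used second factor is the time-based one-time password (TOTP, RFC 6238) shown by authenticator apps. The sketch below implements it with the standard library to show why a phished password alone is not enough: each code is an HMAC of the current 30-second time window under a secret shared only by the server and the user's device. The 30-second step, six digits, SHA-1, and the one-window drift allowance are common defaults rather than requirements, and secret provisioning (usually a Base32 QR code) is left out of this sketch.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(key: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) with HMAC-SHA1."""
    mac = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation: low nibble of the last byte
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(key: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the current time window."""
    now = time.time() if at is None else at
    return hotp(key, int(now // step), digits)


def verify_totp(key: bytes, submitted: str, at: Optional[float] = None,
                step: int = 30, digits: int = 6, drift: int = 1) -> bool:
    """Accept a code from the current window or up to `drift` windows either side."""
    now = time.time() if at is None else at
    counter = int(now // step)
    return any(
        hmac.compare_digest(hotp(key, c, digits), submitted)  # constant-time comparison
        for c in range(counter - drift, counter + drift + 1)
        if c >= 0
    )


secret = b"12345678901234567890"      # RFC 6238 test secret, never a real one
print(totp(secret, at=59, digits=8))  # 94287082 (RFC 6238 test vector)
```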

Training and technology are two sides of the same coin. One is largely ineffective without the other. You need both a well-trained, alert workforce and robust technological infrastructure to create a formidable defense against AI-powered threats.
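The password-manager advice in the list above boils down to one property: every account gets its own long, random password, so a credential leaked from one service unlocks nothing else. A minimal sketch of the generation step uses Python's `secrets` module, which draws from the operating system's cryptographically secure random source (unlike the `random` module). The 20-character default, the 12-character floor, and the site names are assumptions for illustration.

```python
import secrets
import string

ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Return a random password drawn uniformly from ALPHABET using `secrets`."""
    if length < 12:
        raise ValueError("length should be at least 12 characters")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


# One unique password per account (hypothetical site names).
vault = {site: generate_password() for site in ("mail.example.com", "crm.example.com")}
```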

In conclusion, you are facing an adversary of unprecedented adaptability and sophistication. The era of easily identifiable phishing attacks is over. AI has redefined the threat, making it more personal, more convincing, and far more pervasive. Your defense must evolve in tandem. Comprehensive, continuous, and AI-informed training is no longer an optional add-on; it is a fundamental pillar of your organizational security. Invest in your team’s knowledge and vigilance, for they are your most critical defense in this new digital battlefield.

FAQs

What are AI phishing attacks?

AI phishing attacks are cyberattacks that use artificial intelligence technologies to create more convincing and sophisticated phishing messages. These attacks often involve automated generation of emails or messages that mimic legitimate communication, making it harder for recipients to detect fraud.

Why are AI phishing attacks becoming more common?

AI phishing attacks are increasing due to advancements in AI and machine learning, which enable attackers to craft highly personalized and believable phishing content at scale. This makes it easier for cybercriminals to target individuals and organizations effectively.

How can organizations train their teams to recognize AI phishing attacks?

Organizations can train their teams by conducting regular cybersecurity awareness sessions, using simulated phishing exercises, educating employees about the latest phishing tactics, and encouraging vigilance when handling unexpected or suspicious communications.

What are some key signs of an AI-generated phishing email?

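Because AI removes many traditional giveaways such as poor grammar and generic greetings, the more reliable signs are contextual: a sender address or link domain that does not match the purported organization, urgent or threatening language, unexpected attachments or links, requests that bypass normal processes (especially for payments or credentials), and subtle inconsistencies in tone, style, or context that a genuine sender would get right. When in doubt, verify the request through a separate, known channel.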

What steps should employees take if they suspect an AI phishing attack?

Employees should avoid clicking on any links or downloading attachments, report the suspicious message to their IT or security team immediately, and follow organizational protocols for handling potential phishing attempts to minimize risk.
